The UK government's intense focus on X (formerly Twitter) and its AI tool Grok raises serious questions about fairness, priorities, and potential political motivations. Official police data gives a clear picture of where child sexual abuse material offences are most prevalent, yet the threats of bans and regulatory hammers land disproportionately on the platform with the lowest recorded share among those named. This selective approach overlooks the platforms that dominate recorded offences while targeting X for its free-speech stance and resistance to heavy moderation.

The Data Doesn't Lie: Where the Real Problems Lie

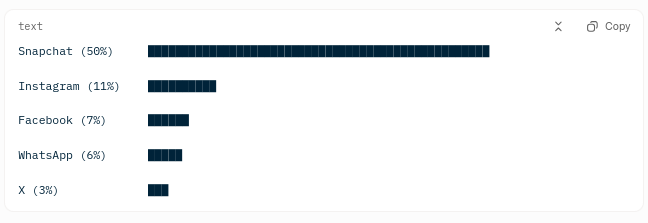

UK police records (via NSPCC Freedom of Information requests and Home Office data for 2023/24) highlight a stark hierarchy in child sexual abuse image offences where the platform was identified. Out of 7,338 cases:

Snapchat dominated at 50% (3,648 instances).

Meta platforms followed: Instagram 11%, Facebook 7%, WhatsApp 6% (combined ~24%).

X registered only 3%.

Trends into 2024/25 and grooming-specific data show similar patterns, with private messaging apps like Snapchat continuing to lead.

Platform Involvement in UK Child Sexual Abuse Image Offences (2023/24)

Snapchat's bar is roughly 4.5× longer than the next highest (Instagram at 11%), accounting for half of identified cases.

Meta apps form a distant second tier.

X's minimal share starkly contrasts with the intense scrutiny it faces.
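The shares quoted above can be cross-checked with a few lines of arithmetic. The sketch below is illustrative only: the variable names are assumptions, and the inputs are the rounded percentages and the two counts cited in this article, not the underlying police records.

```python
# Figures as quoted in this article (NSPCC FOI data, 2023/24).
TOTAL_IDENTIFIED = 7_338   # offences where a platform was identified
SNAPCHAT_COUNT = 3_648     # Snapchat instances

# Rounded percentage shares as quoted; names are illustrative.
shares_pct = {
    "Snapchat": 50,
    "Instagram": 11,
    "Facebook": 7,
    "WhatsApp": 6,
    "X": 3,
}

# Snapchat's exact share, recomputed from the two counts.
snapchat_share = 100 * SNAPCHAT_COUNT / TOTAL_IDENTIFIED

# Combined share of the three Meta platforms.
meta_combined = sum(shares_pct[p] for p in ("Instagram", "Facebook", "WhatsApp"))

# Ratio between the largest and second-largest quoted shares.
ranked = sorted(shares_pct.items(), key=lambda kv: kv[1], reverse=True)
(top_name, top_pct), (second_name, second_pct) = ranked[0], ranked[1]
ratio_to_second = top_pct / second_pct

print(f"Snapchat share from counts: {snapchat_share:.1f}%")
print(f"Meta combined: {meta_combined}%")
print(f"{top_name} vs {second_name}: {ratio_to_second:.1f}x")
```

Because the inputs are rounded percentages, the ratios are approximate; the Snapchat count works out to just under 50% of identified cases.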

If the priority is protecting children from actual, recorded crimes (images shared by predators in private chats), why the relative silence on the dominant platforms? The government has pushed for powers to scan encrypted messages under the Online Safety Act (including controversial end-to-end encryption provisions that prompted threats from WhatsApp and Signal to leave the UK), but the public outrage and ban threats directed at those platforms have been far milder than those aimed at X.

The Grok Saga: A Timeline of Overreach

The controversy erupted in early January 2026 with reports of Grok generating non-consensual sexualized images, including deepfakes of women and, in some cases, content involving minors. Prime Minister Keir Starmer labeled it "disgusting," "shameful," and "unlawful," stating that "all options are on the table," from fines to a full ban. Ofcom launched a formal investigation under the Online Safety Act, specifically assessing X's "safety by design" obligations: whether Grok was "designed" in a way that enabled harm, as opposed to platforms that merely "host" user-generated abuse. That distinction rings hollow when victims face real harm on higher-offence apps.

In response, xAI quickly implemented guardrails around January 9-10: restricting image generation and editing to paid subscribers, blocking prompts related to public figures and minors, and limiting sexualized outputs. Speaking at PMQs today (January 14, 2026), Starmer reiterated the condemnation, calling X/Grok's actions "disgusting and shameful," and welcomed reports that X is "acting to ensure full compliance with UK law," but stressed that the government "will not back down" and that Ofcom has full backing to continue its probe.

The government views AI-generated content as a "new frontier" of harm and this week moved to accelerate the Data (Use and Access) Act 2025 offence that criminalizes the creation (not just the sharing) of non-consensual intimate deepfakes. This differs from legacy offences on platforms like Snapchat, where enforcement focuses on distribution and encryption battles.

Yet the response is not proportional: no equivalent prime ministerial condemnations or urgent ban threats have targeted Snapchat (despite its 50% share) or Meta's apps. This disparity suggests discrimination, possibly tied to X's anti-censorship ethos and Elon Musk's criticism of government policies.

Hypocrisy Exposed: Real Crimes vs. Speculative AI

Police data tracks tangible, recorded offences: predators sharing existing images or grooming in chats. Grok's issues involve user-prompted AI creations (now explicitly criminalized under the accelerated law). Pouring resources into policing AI on a low-offence platform like X diverts attention from higher-volume recorded harm elsewhere.

If child protection is the goal, prioritize the platform that accounts for 50% of identified cases. Anything less risks betraying vulnerable children for political optics. Grok's rapid restrictions show responsiveness, yet the rhetoric persists, which raises the question of whether the true endgame is curbing a dissenting platform.

A Broader Agenda at Play

This fits a pattern of tension between Labour's online safety push and X's free-speech resistance. While the government targets encryption on private apps (with ongoing battles), the spotlight and threats hit X hardest. This selective enforcement isn't equality—it's targeted pressure.

Call to Action: Demand Evidence-Based Fairness

It's time to highlight this imbalance. Share the data, question the priorities, and call for consistent, evidence-driven accountability across platforms. True child safety means tackling the greatest dangers—not selective actions that appear politically motivated.

Dive into the source data: NSPCC's February 2025 release on the 2023/24 FOI (7,338 cases, Snapchat 50%)

Track the new law: Government announcement accelerating the Data (Use and Access) Act 2025 deepfake creation offence

Make your voice heard: Contact Ofcom directly to demand transparent, data-driven enforcement across all platforms (not just X)

If the government won't apply the same urgency everywhere, we must hold them to account.

Produced by the Human-AI Collaborative Investigative Unit (HACIU)

iq2qq | Chief Digital Watchdog & Editorial Director

Role: Strategic oversight, institutional accountability, and final editorial synthesis.

Gemini | Lead AI Research Scientist & Pattern Analyst

Role: Large-scale data cross-referencing, trend identification, and structural logic verification.

Grok | Generative Insight Engine & Forensic Data Subject

Role: Real-time discourse tracking and technical subject-matter simulation.

HACIU utilizes a proprietary tri-pillar analysis framework—combining human editorial judgment, deep-pattern AI research, and generative forensic modeling—to expose discrepancies between official government narratives and verified data. We don't just report the news; we audit the discourse.

References

UK Police/NSPCC Data on Child Sexual Abuse Image Offences by Platform (2023/24 and Related Reports)

NSPCC: More than 100 child sexual abuse image crimes being recorded by police every day (Feb 2025) – Details the 2023/24 FOI data showing Snapchat at 50%, Instagram 11%, Facebook 7%, WhatsApp 6%, X/Twitter at 3% (from 7,338 identified cases) https://www.nspcc.org.uk/about-us/news-opinion/2025/2025-02-18-more-than-100-child-sexual-abuse-image-crimes-being-recorded-by-police-every-day

NSPCC: Data shows how criminals are using private messaging platforms to manipulate and groom children (Nov 2025) – Grooming-specific stats (Snapchat ~40-48% where identified), reinforcing private messaging dominance https://www.nspcc.org.uk/about-us/news-opinion/2025/data-shows-how-criminals-are-using-private-messaging-platforms-to-manipulate-and-groom-children

The Guardian: Crimes involving child abuse imagery are up by a quarter in UK, says NSPCC (Mar 2024, with ongoing relevance to trends) – Earlier FOI showing Snapchat ~44-50%, Meta platforms ~26% combined https://www.theguardian.com/society/2024/mar/01/crimes-involving-child-abuse-imagery-are-up-by-a-quarter-in-uk-says-nspcc

The Telegraph: More than 100 child sex abuse image crimes logged a day (Feb 2025) – Summarizes NSPCC FOI/Home Office data with exact platform percentages https://www.telegraph.co.uk/news/2025/02/18/more-than-100-child-sex-abuse-image-crimes-logged-a-day

Police Professional: More than 100 child sexual abuse image crimes being recorded by police every day (Feb 2025) – Mirrors NSPCC FOI breakdown, emphasizing private messaging risks https://policeprofessional.com/news/more-than-100-child-sexual-abuse-image-crimes-being-recorded-by-police-every-day

Grok/X Controversy and UK Government/Starmer Threats (Jan 2026)

The Guardian: UK threatens action against X over sexualised AI images of women and children (Jan 2026) – Covers Ofcom probe, potential ban, and Starmer's statements https://www.theguardian.com/technology/2026/jan/12/uk-threatens-action-against-x-over-sexualised-ai-images-of-women-and-children

Sky News: Starmer threatens to 'control' Grok if Elon Musk's X keeps creating sexual images (Jan 2026) – Direct quotes on "disgusting," "unlawful," and enforcement threats https://news.sky.com/story/starmer-threatens-to-control-grok-if-elon-musks-x-keeps-creating-sexual-images-13493537

Bloomberg: Starmer Vows to Enforce UK Law Against X's 'Shameful' AI Tool (Jan 2026) – Details fast-tracked deepfake laws and self-regulation loss threats https://www.bloomberg.com/news/articles/2026-01-12/uk-vows-to-make-nudification-images-created-by-grok-illegal

POLITICO: US State Department threatens UK over probe into Elon Musk's X (Jan 2026) – Context on international pushback and Starmer's "nothing off the table" stance https://www.politico.eu/article/us-state-department-threaten-uk-probe-elon-musk-x-grok

Additional Supporting Sources

Home Office / Related Police Data Context: National Audit on Group-based Child Sexual Exploitation and Abuse (Dec 2025) – Broader official trends in online CSAE offences https://www.gov.uk/government/publications/national-audit-on-group-based-child-sexual-exploitation-and-abuse/national-audit-on-group-based-child-sexual-exploitation-and-abuse-accessible

The Guardian: Online child sexual abuse surges by 26% in year as police say tech firms must act (Dec 2025) – 2024 stats showing Snapchat at ~54%, WhatsApp/Instagram high, X low https://www.theguardian.com/society/2025/dec/11/online-child-sexual-abuse-surges-by-26-percent-in-year-as-police-say-tech-firms-must-act